Caution

Buildbot no longer supports Python 2.7 on the Buildbot master.

This is the Buildbot documentation for Buildbot version 2.7.0.

If you are evaluating Buildbot and would like to get started quickly, start with the Tutorial. Regular users of Buildbot should consult the Manual, and those wishing to modify Buildbot directly will want to be familiar with the Developer’s Documentation.

Table Of Contents

1. Buildbot Tutorial

Contents:

1.1. First Run

1.1.1. Goal

This tutorial will take you from zero to running your first buildbot master and worker as quickly as possible, without changing the default configuration.

This tutorial is all about instant gratification and the five minute experience: in five minutes we want to convince you that this project works, and that you should seriously consider spending time learning the system. In this tutorial no configuration or code changes are done.

This tutorial assumes that you are running Unix, but might be adaptable to Windows.

Thanks to virtualenv, installing buildbot in a standalone environment is very easy. For those more familiar with Docker, there also exists a docker version of these instructions.

You should be able to cut and paste each shell block from this tutorial directly into a terminal.

1.1.2. Simple introduction to BuildBot

Before trying to run BuildBot it’s helpful to know what BuildBot is.

BuildBot is a continuous integration framework written in Python. It consists of a master daemon and potentially many worker daemons that usually run on other machines. The master daemon runs a web server that allows the end user to start new builds and to control the behaviour of the BuildBot instance. The master also distributes builds to the workers. The worker daemons connect to the master daemon and execute builds whenever master tells them to do so.

In this tutorial we will run a single master and a single worker on the same machine.

A more thorough explanation can be found in the manual section of the Buildbot documentation.

1.1.3. Getting ready

There are many ways to get the code on your machine. We will use the easiest one: via pip in a virtualenv. It has the advantage of not polluting your operating system, as everything will be contained in the virtualenv.

To make this work, you will need the following installed:

- Python and the development packages for it

- virtualenv

Preferably, use your distribution package manager to install these.

You will also need a working Internet connection, as virtualenv and pip will need to download other projects from the Internet. The master and worker daemons will need to be able to connect to github.com via HTTPS to fetch the repo we’re testing; if you need to use a proxy for this, ensure that either the HTTPS_PROXY or ALL_PROXY environment variable is set to your proxy, e.g., by executing export HTTPS_PROXY=http://localhost:9080 in the shell before starting each daemon.
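As a quick sanity check before starting the daemons, the following minimal sketch reports which of the two proxy variables is set in the current environment. The helper name configured_proxy is hypothetical and not part of Buildbot; it only inspects the environment and does not test the proxy itself.

```python
import os


def configured_proxy(environ=None):
    """Return (variable, value) for the first proxy variable that is set, else None.

    Hypothetical helper: it only looks at HTTPS_PROXY and ALL_PROXY,
    the two variables named in this tutorial.
    """
    env = os.environ if environ is None else environ
    for name in ("HTTPS_PROXY", "ALL_PROXY"):
        value = env.get(name)
        if value:
            return name, value
    return None


found = configured_proxy()
if found is None:
    print("No HTTPS_PROXY or ALL_PROXY set; connections will be direct.")
else:
    print("Proxy taken from %s: %s" % found)
```

Run it in the same shell in which you intend to start the master or the worker, since each daemon inherits the environment of the shell that starts it.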

Note

Buildbot does not require root access. Run the commands in this tutorial as a normal, unprivileged user.

1.1.4. Creating a master

The first necessary step is to create a virtualenv for our master. We will also use a separate directory to demonstrate the distinction between a master and worker:

mkdir -p ~/tmp/bb-master

cd ~/tmp/bb-master

On Python 3:

python3 -m venv sandbox

source sandbox/bin/activate

Now that we are ready, we need to install buildbot:

pip install --upgrade pip

pip install 'buildbot[bundle]'

Now that buildbot is installed, it’s time to create the master:

buildbot create-master master

Buildbot’s activity is controlled by a configuration file. By default, Buildbot reads its configuration from a file named master.cfg in the master directory. Buildbot comes with a sample configuration file named master.cfg.sample. We will use the sample configuration file unchanged:

mv master/master.cfg.sample master/master.cfg

Finally, start the master:

buildbot start master

You will now see some log information from the master in this terminal. It should end with lines like these:

2014-11-01 15:52:55+0100 [-] BuildMaster is running

The buildmaster appears to have (re)started correctly.

From now on, feel free to visit the web status page running on port 8010: http://localhost:8010/
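If the page does not load, it can help to check whether anything is listening on the port at all. The sketch below is one way to do that using only the Python standard library; the function name port_is_open is hypothetical, and the default host and port are the ones used in this tutorial.

```python
import socket


def port_is_open(host="localhost", port=8010, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts and name resolution failures.
        return False


print("web UI reachable:", port_is_open())
```

A result of False right after buildbot start master usually means the master did not start correctly; the master log is the next place to look.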

Our master now needs (at least) a worker to execute its commands. For that, head on to the next section!

1.1.5. Creating a worker

The worker will be executing the commands sent by the master. In this tutorial, we are using the buildbot/hello-world project as an example. As a consequence of this, your worker will need access to the git command in order to check out some code. Be sure that it is installed, or the builds will fail.

Same as we did for our master, we will create a virtualenv for our worker next to the other one. It would however be completely ok to do this on another computer - as long as the worker computer is able to connect to the master one:

mkdir -p ~/tmp/bb-worker

cd ~/tmp/bb-worker

On Python 2:

virtualenv --no-site-packages sandbox

source sandbox/bin/activate

On Python 3:

python3 -m venv sandbox

source sandbox/bin/activate

Install the buildbot-worker command:

pip install --upgrade pip

pip install buildbot-worker

# required for `runtests` build

pip install setuptools-trial

Now, create the worker:

buildbot-worker create-worker worker localhost example-worker pass

Note

If you decided to create this from another computer, you should replace localhost with the name of the computer where your master is running.

The username (example-worker), and password (pass) should be the same as those in master/master.cfg; verify this is the case by looking at the section for c['workers']:

cat ../bb-master/master/master.cfg
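Instead of reading the whole file, you can pull out just the worker credentials. The sketch below parses the configuration with the standard ast module (without executing it) and lists the literal name and password of every Worker(...) call. It assumes the workers are declared in the form worker.Worker("name", "password"), as in the sample configuration; the function name worker_credentials is hypothetical.

```python
import ast


def worker_credentials(config_source):
    """Return (name, password) pairs for Worker(...) calls with literal arguments."""
    pairs = []
    for node in ast.walk(ast.parse(config_source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Match both `worker.Worker(...)` and a bare `Worker(...)`.
        if isinstance(func, ast.Attribute):
            called = func.attr
        else:
            called = getattr(func, "id", None)
        if called != "Worker" or len(node.args) < 2:
            continue
        first, second = node.args[0], node.args[1]
        if isinstance(first, ast.Constant) and isinstance(second, ast.Constant):
            pairs.append((first.value, second.value))
    return pairs


# Assumed shape of the relevant line in the sample master.cfg.
sample = 'c = {}\nc["workers"] = [worker.Worker("example-worker", "pass")]\n'
print(worker_credentials(sample))
```

To check your real file, pass it the text of ../bb-master/master/master.cfg and compare the result with the arguments you gave to buildbot-worker create-worker.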

And finally, start the worker:

buildbot-worker start worker

Check the worker’s output. It should end with lines like these:

2014-11-01 15:56:51+0100 [-] Connecting to localhost:9989

2014-11-01 15:56:51+0100 [Broker,client] message from master: attached

The worker appears to have (re)started correctly.

Meanwhile, from the other terminal, in the master log (twistd.log in the master directory), you should see lines like these:

2014-11-01 15:56:51+0100 [Broker,1,127.0.0.1] worker 'example-worker' attaching from IPv4Address(TCP, '127.0.0.1', 54015)

2014-11-01 15:56:51+0100 [Broker,1,127.0.0.1] Got workerinfo from 'example-worker'

2014-11-01 15:56:51+0100 [-] bot attached
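Rather than scanning the log by eye, you can search it for the attach message. This sketch extracts worker names from lines of the form shown above; the regular expression is derived only from that sample output, so treat it as an assumption about the log format rather than a stable interface. The function name attached_workers is hypothetical.

```python
import re

# Pattern based on the sample log line in this tutorial.
ATTACH_RE = re.compile(r"worker '([^']+)' attaching from")


def attached_workers(log_text):
    """Return worker names found in 'attaching' lines, in order of first appearance."""
    seen = []
    for match in ATTACH_RE.finditer(log_text):
        name = match.group(1)
        if name not in seen:
            seen.append(name)
    return seen


sample_log = (
    "2014-11-01 15:56:51+0100 [Broker,1,127.0.0.1] worker 'example-worker' "
    "attaching from IPv4Address(TCP, '127.0.0.1', 54015)\n"
    "2014-11-01 15:56:51+0100 [-] bot attached\n"
)
print(attached_workers(sample_log))
```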

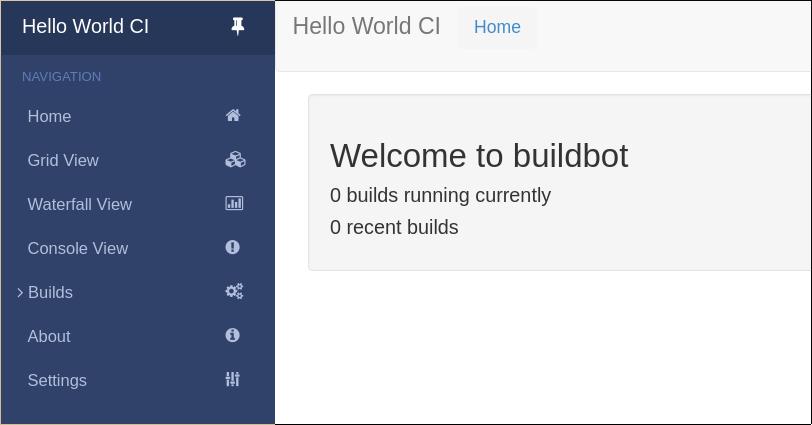

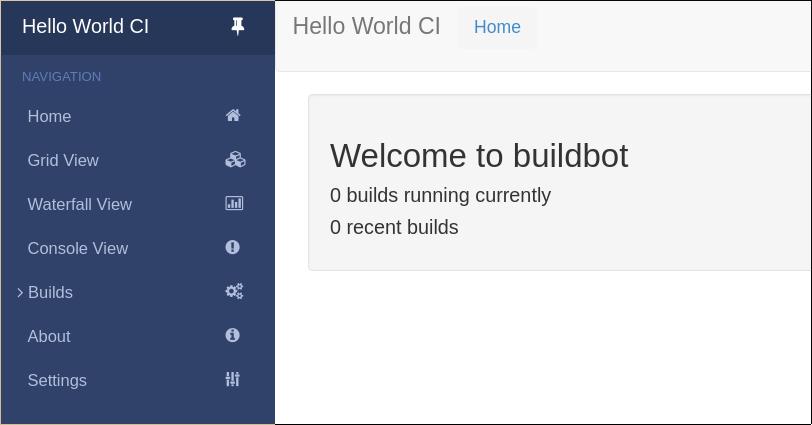

You should now be able to go to http://localhost:8010, where you will see a web page similar to:

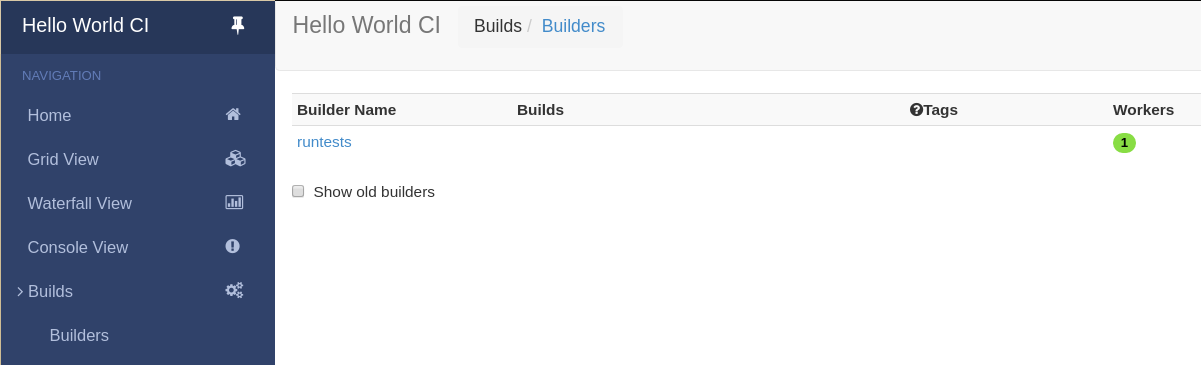

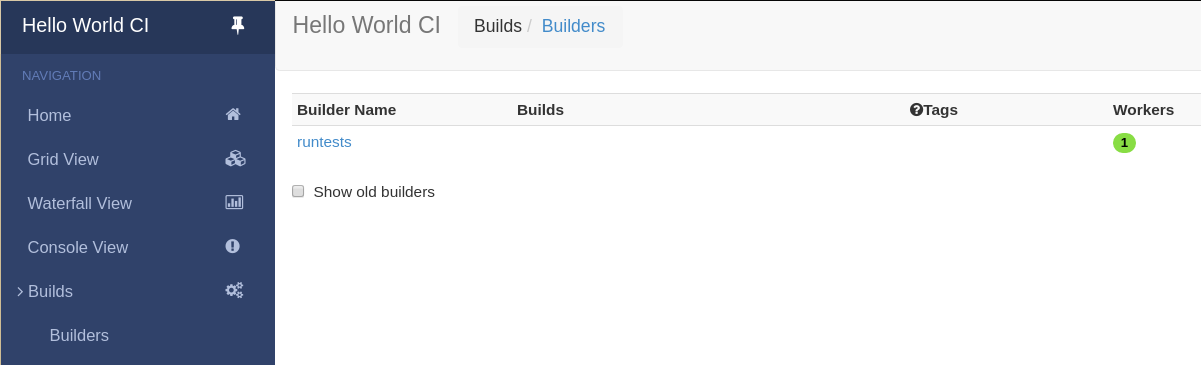

Click on “Builds” at the left to open the submenu and then Builders to see that the worker you just started (identified by the green bubble) has connected to the master:

Your master is now quietly waiting for new commits to hello-world. This doesn’t happen very often though. In the next section, we’ll see how to manually start a build.

We just wanted to get you to dip your toes in the water. It’s easy to take your first steps, but this is about as far as we can go without touching the configuration.

You’ve got a taste now, but you’re probably curious for more. Let’s step it up a little in the second tutorial by changing the configuration and doing an actual build. Continue on to A Quick Tour.

1.2. First Buildbot run with Docker

Note

Docker can be tricky to get working correctly if you haven’t used it before. If you’re having trouble, first determine whether it is a Buildbot issue or a Docker issue by running:

docker run ubuntu:12.04 apt-get update

If that fails, look for help with your Docker install. On the other hand, if that succeeds, then you may have better luck getting help from members of the Buildbot community.

Docker is a tool that makes building and deploying custom environments a breeze. It uses lightweight Linux containers (LXC) and performs quickly, making it a great instrument for the testing community. The next section includes a Docker pre-flight check. If it takes more than 3 minutes to get the ‘Success’ message for you, try the Buildbot pip-based first run instead.

1.2.1. Current Docker dependencies

- Linux system, with at least kernel 3.8 and AUFS support. For example, standard Ubuntu, Debian and Arch systems.

- Packages: lxc, iptables, ca-certificates, and bzip2.

- Local clock on time or slightly in the future for proper SSL communication.

- This tutorial uses docker-compose to run a master, a worker, and a postgresql database server

1.2.2. Installation

- Use the Docker installation instructions for your operating system.

- Make sure you install docker-compose. As root or inside a virtualenv, run:

pip install docker-compose

- Test docker is happy in your environment:

sudo docker run -i busybox /bin/echo Success

1.2.3. Building and running Buildbot

# clone the example repository

git clone --depth 1 https://github.com/buildbot/buildbot-docker-example-config

# Build the Buildbot container (it will take a few minutes to download packages)

cd buildbot-docker-example-config/simple

docker-compose up

You should now be able to go to http://localhost:8010 and see a web page similar to:

Click on “Builds” at the left to open the submenu and then Builders to see that the worker you just started has connected to the master:

1.2.4. Overview of the docker-compose configuration

This docker-compose configuration is meant as a basis for what you would put in production:

- Separated containers for each component

- A solid database backend with postgresql

- A buildbot master that exposes its configuration to the docker host

- A buildbot worker that can be cloned in order to add additional power

- Containers are linked together so that the only port exposed externally is the web server

- The default master container is based on Alpine Linux for a minimal footprint

- The default worker container is based on the more widely known Ubuntu distribution, as this is the container you want to customize.

- Download the config from a tarball accessible via a web server

1.2.5. Playing with your Buildbot containers

If you’ve come this far, you have a Buildbot environment that you can freely experiment with.

In order to modify the configuration, you need to fork the project on github (https://github.com/buildbot/buildbot-docker-example-config). Then you can clone your own fork, and start the docker-compose again.

To modify your config, edit the master.cfg file, commit your changes, and push to your fork. You can use the command buildbot checkconfig in order to make sure the config is valid before the push. You will need to change the variable BUILDBOT_CONFIG_URL in docker-compose.yml in order to point to your github fork.

The BUILDBOT_CONFIG_URL may point to a .tar.gz file accessible from HTTP. Several git servers like github can generate that tarball automatically from the master branch of a git repository. If the BUILDBOT_CONFIG_URL does not end with .tar.gz, it is considered to be the URL to a master.cfg file accessible from HTTP.
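The rule in the last paragraph can be written down in a few lines. This is only an illustration of the rule as stated here, not the container's actual start-up script; the function name config_url_kind and the example.com URLs are hypothetical.

```python
def config_url_kind(url):
    """Classify a BUILDBOT_CONFIG_URL value according to the rule described above."""
    if url.endswith(".tar.gz"):
        return "tarball"      # archive containing the configuration
    return "master.cfg"       # anything else is treated as a single config file


for example in ("https://example.com/myconfig.tar.gz",
                "https://example.com/raw/master.cfg"):
    print(example, "->", config_url_kind(example))
```

One consequence worth noting: a tarball URL that carries a query string after .tar.gz would, under this rule, be treated as a plain master.cfg URL.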

1.2.6. Customize your Worker container

It is advised to customize your worker container in order to suit your project’s build dependencies and needs. An example Dockerfile is available which the buildbot community uses for its own CI purposes:

https://github.com/buildbot/metabbotcfg/blob/nine/docker/metaworker/Dockerfile

1.2.7. Multi-master

A multi-master environment can be set up using the multimaster/docker-compose.yml file in the example repository:

# Build the Buildbot container (it will take a few minutes to download packages)

cd buildbot-docker-example-config/simple

docker-compose up -d

docker-compose scale buildbot=4

1.2.8. Going forward

You’ve got a taste now, but you’re probably curious for more. Let’s step it up a little in the second tutorial by changing the configuration and doing an actual build. Continue on to A Quick Tour.

1.3. A Quick Tour

1.3.1. Goal

This tutorial will expand on the First Run tutorial by taking a quick tour around some of the features of buildbot that are hinted at in the comments in the sample configuration. We will simply change parts of the default configuration and explain the activated features.

As a part of this tutorial, we will make buildbot do a few actual builds.

This section will teach you how to:

- make simple configuration changes and activate them

- deal with configuration errors

- force builds

- enable and control the IRC bot

- enable ssh debugging

- add a ‘try’ scheduler

1.3.2. Setting Project Name and URL

Let’s start simple by looking at where you would customize the buildbot’s project name and URL.

We continue where we left off in the First Run tutorial.

Open a new terminal, go to the directory you created master in, activate the same virtualenv instance you created before, and open the master configuration file with an editor (here $EDITOR is your editor of choice like vim, gedit, or emacs):

cd ~/tmp/bb-master

source sandbox/bin/activate

$EDITOR master/master.cfg

Now, look for the section marked PROJECT IDENTITY which reads:

####### PROJECT IDENTITY

# the 'title' string will appear at the top of this buildbot installation's

# home pages (linked to the 'titleURL').

c['title'] = "Hello World CI"

c['titleURL'] = "https://buildbot.github.io/hello-world/"

If you want, you can change either of these values to anything you like to see what happens when you change them.

After making a change go into the terminal and type:

buildbot reconfig master

You will see a handful of lines of output from the master log, much like this:

2011-12-04 10:11:09-0600 [-] loading configuration from /home/dustin/tmp/buildbot/master/master.cfg

2011-12-04 10:11:09-0600 [-] configuration update started

2011-12-04 10:11:09-0600 [-] builder runtests is unchanged

2011-12-04 10:11:09-0600 [-] removing IStatusReceiver <WebStatus on port tcp:8010 at 0x2aee368>

2011-12-04 10:11:09-0600 [-] (TCP Port 8010 Closed)

2011-12-04 10:11:09-0600 [-] Stopping factory <buildbot.status.web.baseweb.RotateLogSite instance at 0x2e36638>

2011-12-04 10:11:09-0600 [-] adding IStatusReceiver <WebStatus on port tcp:8010 at 0x2c2d950>

2011-12-04 10:11:09-0600 [-] RotateLogSite starting on 8010

2011-12-04 10:11:09-0600 [-] Starting factory <buildbot.status.web.baseweb.RotateLogSite instance at 0x2e36e18>

2011-12-04 10:11:09-0600 [-] Setting up http.log rotating 10 files of 10000000 bytes each

2011-12-04 10:11:09-0600 [-] WebStatus using (/home/dustin/tmp/buildbot/master/public_html)

2011-12-04 10:11:09-0600 [-] removing 0 old schedulers, updating 0, and adding 0

2011-12-04 10:11:09-0600 [-] adding 1 new changesources, removing 1

2011-12-04 10:11:09-0600 [-] gitpoller: using workdir '/home/dustin/tmp/buildbot/master/gitpoller-workdir'

2011-12-04 10:11:09-0600 [-] GitPoller repository already exists

2011-12-04 10:11:09-0600 [-] configuration update complete

Reconfiguration appears to have completed successfully.

The important lines are the ones telling you that it is loading the new configuration at the top, and the one at the bottom saying that the update is complete.

Now, if you go back to the waterfall page, you will see that the project’s name is whatever you changed it to. Clicking on the project name at the bottom of the page should take you to the URL you put in the configuration.

1.3.3. Configuration Errors

It is very common to make a mistake when configuring buildbot, so you might as well see now what happens in that case and what you can do to fix the error.

Open up the config again and introduce a syntax error by removing the first single quote in the two lines you changed, so they read:

c[title'] = "Hello World CI"

c[titleURL'] = "https://buildbot.github.io/hello-world/"

This creates a Python SyntaxError. Now go ahead and reconfig the buildmaster:

buildbot reconfig master

This time, the output looks like:

2015-08-14 18:40:46+0000 [-] beginning configuration update

2015-08-14 18:40:46+0000 [-] Loading configuration from '/data/buildbot/master/master.cfg'

2015-08-14 18:40:46+0000 [-] error while parsing config file:

Traceback (most recent call last):

File "/usr/local/lib/python2.7/dist-packages/buildbot/master.py", line 265, in reconfig

d = self.doReconfig()

File "/usr/local/lib/python2.7/dist-packages/twisted/internet/defer.py", line 1274, in unwindGenerator

return _inlineCallbacks(None, gen, Deferred())

File "/usr/local/lib/python2.7/dist-packages/twisted/internet/defer.py", line 1128, in _inlineCallbacks

result = g.send(result)

File "/usr/local/lib/python2.7/dist-packages/buildbot/master.py", line 289, in doReconfig

self.configFileName)

--- <exception caught here> ---

File "/usr/local/lib/python2.7/dist-packages/buildbot/config.py", line 156, in loadConfig

exec f in localDict

exceptions.SyntaxError: EOL while scanning string literal (master.cfg, line 103)

2015-08-14 18:40:46+0000 [-] error while parsing config file: EOL while scanning string literal (master.cfg, line 103) (traceback in logfile)

2015-08-14 18:40:46+0000 [-] reconfig aborted without making any changes

Reconfiguration failed. Please inspect the master.cfg file for errors,

correct them, then try 'buildbot reconfig' again.

This time, it’s clear that there was a mistake in the configuration. Luckily, the Buildbot master will ignore the wrong configuration and keep running with the previous configuration.

The message is clear enough, so open the configuration again, fix the error, and reconfig the master.
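Because master.cfg is plain Python, a syntax error like the one above can also be caught before running reconfig. The sketch below uses the built-in compile() function for that; the helper name config_syntax_error is hypothetical. It checks Python syntax only and knows nothing about Buildbot's own configuration rules, so it complements rather than replaces buildbot checkconfig.

```python
def config_syntax_error(source, filename="master.cfg"):
    """Return None if source is valid Python, else a 'file:line: message' string."""
    try:
        compile(source, filename, "exec")
    except SyntaxError as exc:
        return "%s:%s: %s" % (exc.filename, exc.lineno, exc.msg)
    return None


good = 'c = {}\nc["title"] = "Hello World CI"\n'
bad = "c = {}\nc[title'] = \"Hello World CI\"\n"  # first quote removed, as above

print(config_syntax_error(good))
print(config_syntax_error(bad))
```

The exact wording of the error message depends on the Python version, but the reported line number points at the broken line.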

1.3.4. Your First Build

By now you’re probably thinking: “All this time spent and still not done a single build? What was the name of this project again?”

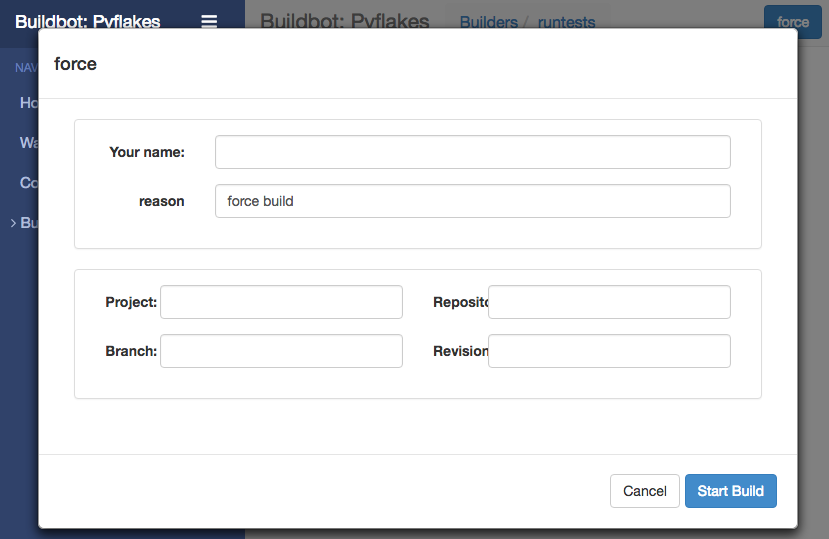

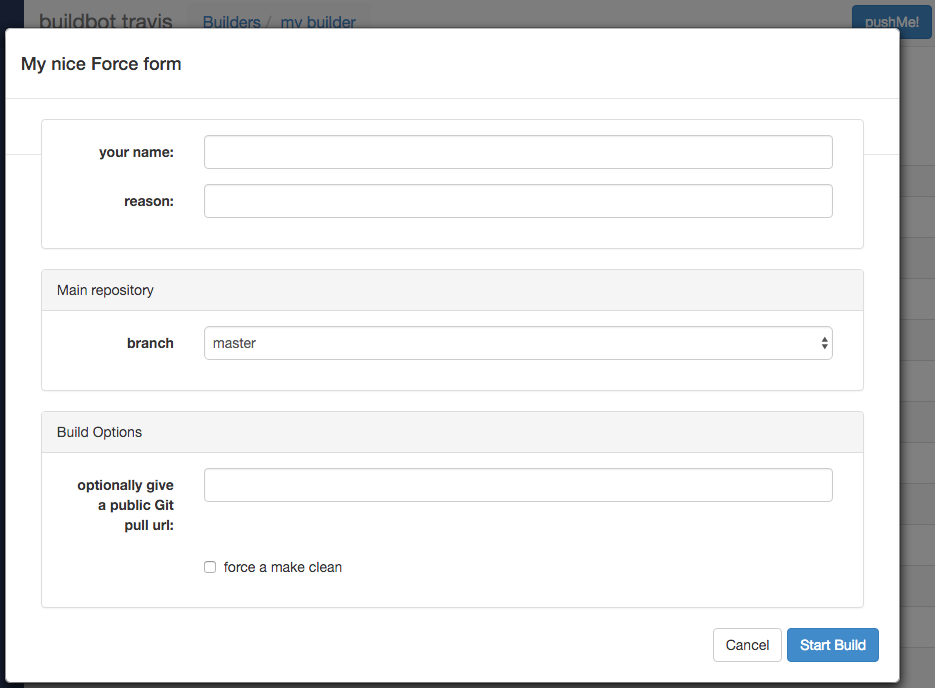

On the Builders page, click on the runtests link. You’ll see a builder page, and a blue “force” button that will bring up the following dialog box:

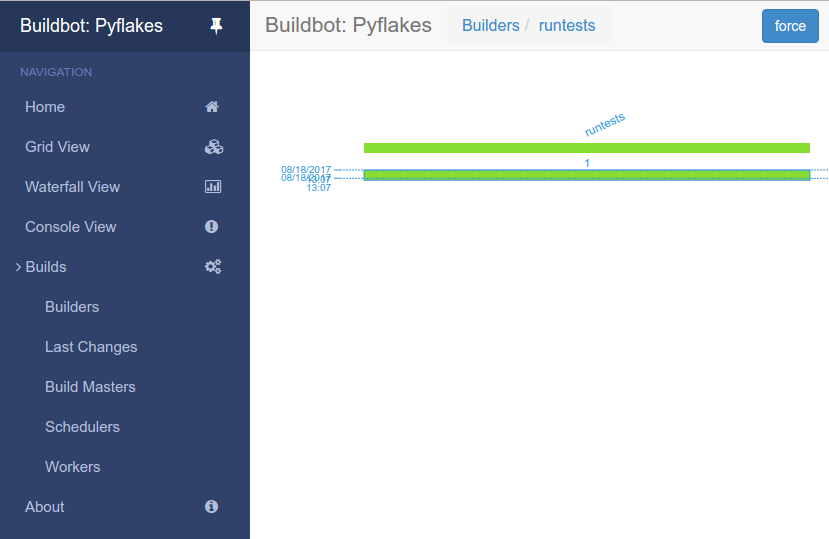

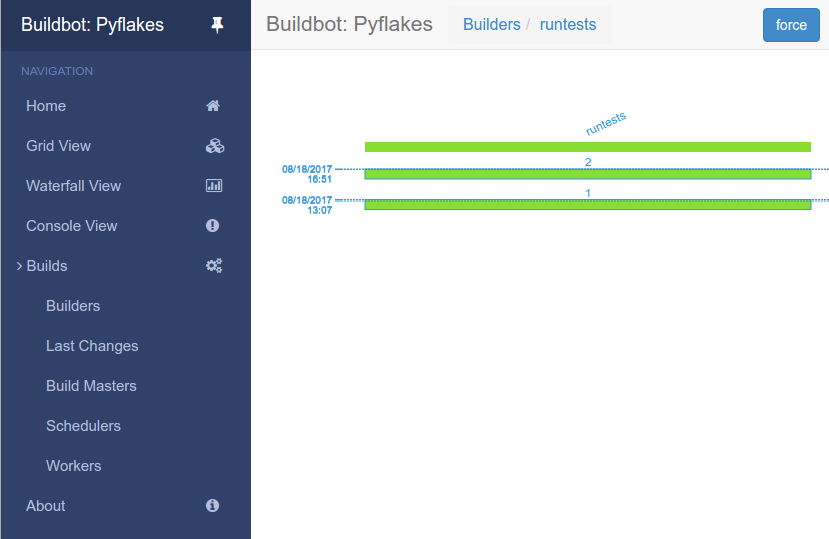

Click Start Build - there’s no need to fill in any of the fields in this case. Next, click on view in waterfall.

You will now see:

1.3.5. Enabling the IRC Bot

Buildbot includes an IRC bot that you can tell to join a channel. You can then control the bot from that channel and have it report on the status of buildbot.

Note

Security Note

Please note that any user who has access to your IRC channel, or who can send a private message to the bot, will be able to create or stop builds (bug #3377).

First, start an IRC client of your choice, connect to irc.freenode.net and join an empty channel. In this example we will use #buildbot-test, so go join that channel. (Note: please do not join the main buildbot channel!)

Edit master.cfg and look for the BUILDBOT SERVICES section. At the end of that section add the lines:

c['services'].append(reporters.IRC(host="irc.freenode.net", nick="bbtest",

channels=["#buildbot-test"]))

Reconfigure the build master then do:

grep -i irc master/twistd.log

The log output should contain lines like these:

2016-11-13 15:53:06+0100 [-] Starting factory <buildbot.reporters.irc.IrcStatusFactory instance at 0x7ff2b4b72710>

2016-11-13 15:53:19+0100 [IrcStatusBot,client] <buildbot.reporters.irc.IrcStatusBot object at 0x7ff2b5075750>: I have joined #buildbot-test

You should see the bot now joining in your IRC client. In your IRC channel, type:

bbtest: commands

to get a list of the commands the bot supports.

Let’s tell the bot to notify us of certain events. To learn which events we can be notified about, type:

bbtest: help notify

Now let’s set some event notifications:

<@lsblakk> bbtest: notify on started finished failure

< bbtest> The following events are being notified: ['started', 'failure', 'finished']

Now, go back to the web interface and force another build. Alternatively, ask the bot to force a build:

<@lsblakk> bbtest: force build --codebase= runtests

< bbtest> build #1 of runtests started

< bbtest> Hey! build runtests #1 is complete: Success [finished]

You can also see the new builds in the web interface.

The full documentation is available at IRC.

1.3.7. Debugging with Manhole

You can do some debugging by using manhole, an interactive Python shell. It exposes full access to the buildmaster’s account (including the ability to modify and delete files), so it should not be enabled with a weak or easily guessable password.

To use this you will need to install an additional package or two to your virtualenv:

cd ~/tmp/bb-master

source sandbox/bin/activate

pip install -U pip

pip install cryptography pyasn1

You will also need to generate an SSH host key for the Manhole server.

mkdir -p /data/ssh_host_keys

ckeygen -t rsa -f /data/ssh_host_keys/ssh_host_rsa_key

In your master.cfg find:

c = BuildmasterConfig = {}

Insert the following to enable debugging mode with manhole:

####### DEBUGGING

from buildbot import manhole

c['manhole'] = manhole.PasswordManhole("tcp:1234:interface=127.0.0.1","admin","passwd", ssh_hostkey_dir="/data/ssh_host_keys/")

After restarting the master, you can ssh into the master and get an interactive Python shell:

ssh -p1234 admin@127.0.0.1

# enter passwd at prompt

Note

The pyasn1-0.1.1 release has a bug which results in an exception similar to this on startup:

exceptions.TypeError: argument 2 must be long, not int

If you see this, the temporary solution is to install the previous version of pyasn1:

pip install pyasn1==0.0.13b

If you wanted to check which workers are connected and what builders those workers are assigned to you could do:

>>> master.workers.workers

{'example-worker': <Worker 'example-worker', current builders: runtests>}

Objects can be explored in more depth using dir(x) or the helper function show(x).

1.3.8. Adding a ‘try’ scheduler

Buildbot includes a way for developers to submit patches for testing without committing them to the source code control system. (This is really handy for projects that support several operating systems or architectures.)

To set this up, add the following lines to master.cfg:

from buildbot.scheduler import Try_Userpass

c['schedulers'] = []

c['schedulers'].append(Try_Userpass(

name='try',

builderNames=['runtests'],

port=5555,

userpass=[('sampleuser','samplepass')]))

Then you can submit changes using the try command.

Let’s try this out by making a one-line change to hello-world, say, to change the greeting it returns:

git clone https://github.com/buildbot/hello-world.git hello-world-git

cd hello-world-git/hello

$EDITOR __init__.py

# change 'return "hello " + who' on line 6 to 'return "greets " + who'

Then run buildbot’s try command as follows:

cd ~/tmp/bb-master

source sandbox/bin/activate

buildbot try --connect=pb --master=127.0.0.1:5555 --username=sampleuser --passwd=samplepass --vc=git

This will do git diff for you and send the resulting patch to the server for build and test against the latest sources from Git.

Now go back to the waterfall page, click on the runtests link, and scroll down. You should see that another build has been started with your change. The “Reason” for the job will be listed as “‘try’ job”, and the blamelist will be empty.

To make yourself show up as the author of the change, use the --who=emailaddr option on buildbot try to pass your email address.

To make a description of the change show up, use the --properties=comment="this is a comment" option on buildbot try.

To use ssh instead of a private username/password database, see Try_Jobdir.

1.4. Further Reading

See the following user-contributed tutorials for other highlights and ideas:

1.4.1. Buildbot in 5 minutes - a user-contributed tutorial

(Ok, maybe 10.)

Buildbot is really an excellent piece of software, however it can be a bit confusing for a newcomer (like me when I first started looking at it). Typically, at first sight it looks like a bunch of complicated concepts that make no sense and whose relationships with each other are unclear. After some time and some rereading, it all slowly starts to be more and more meaningful, until you finally say “oh!” and things start to make sense. Once you get there, you realize that the documentation is great, but only if you already know what it’s about.

This is what happened to me, at least. Here I’m going to (try to) explain things in a way that would have helped me more as a newcomer. The approach I’m taking is more or less the reverse of that used by the documentation, that is, I’m going to start from the components that do the actual work (the builders) and go up the chain from there up to change sources. I hope purists will forgive this unorthodoxy. Here I’m trying to clarify the concepts only, and will not go into the details of each object or property; the documentation explains those quite well.

1.4.1.1. Installation

I won’t cover the installation; both Buildbot master and worker are available as packages for the major distributions, and in any case the instructions in the official documentation are fine. This document will refer to Buildbot 0.8.5 which was current at the time of writing, but hopefully the concepts are not too different in other versions. All the code shown is of course Python code, and has to be included in the master.cfg master configuration file.

We won’t cover the basic things such as how to define the workers, project names, or other administrative information that is contained in that file; for that, again the official documentation is fine.

1.4.1.2. Builders: the workhorses

Since Buildbot is a tool whose goal is the automation of software builds, it makes sense to me to start from where we tell Buildbot how to build our software: the builder (or builders, since there can be more than one).

Simply put, a builder is an element that is in charge of performing some action or sequence of actions, normally something related to building software (for example, checking out the source, or make all), but it can also run arbitrary commands.

A builder is configured with a list of workers that it can use to carry out its task. The other fundamental piece of information that a builder needs is, of course, the list of things it has to do (which will normally run on the chosen worker). In Buildbot, this list of things is represented as a BuildFactory object, which is essentially a sequence of steps, each one defining a certain operation or command.

Enough talk, let’s see an example. For this example, we are going to assume that our super software project can be built using a simple make all, and there is another target make packages that creates rpm, deb and tgz packages of the binaries. In the real world things are usually more complex (for example there may be a configure step, or multiple targets), but the concepts are the same; it will just be a matter of adding more steps to a builder, or creating multiple builders, although sometimes the resulting builders can be quite complex.

So to perform a manual build of our project we would type this from the command line (assuming we are at the root of the local copy of the repository):

$ make clean # clean remnants of previous builds

...

$ svn update

...

$ make all

...

$ make packages

...

# optional but included in the example: copy packages to some central machine

$ scp packages/*.rpm packages/*.deb packages/*.tgz someuser@somehost:/repository

...

Here we’re assuming the repository is SVN, but again the concepts are the same with git, mercurial or any other VCS.

Now, to automate this, we create a builder where each step is one of the commands we typed above. A step can be a shell command object, or a dedicated object that checks out the source code (there are various types for different repositories, see the docs for more info), or yet something else:

from buildbot.plugins import steps, util

# first, let's create the individual step objects

# step 1: make clean; this fails if the worker has no local copy, but

# is harmless and will only happen the first time

makeclean = steps.ShellCommand(name="make clean",

command=["make", "clean"],

description="make clean")

# step 2: svn update (here updates trunk, see the docs for more

# on how to update a branch, or make it more generic).

checkout = steps.SVN(repourl='svn://myrepo/projects/coolproject/trunk',

mode="incremental",

username="foo",

password="bar",

haltOnFailure=True)

# step 3: make all

makeall = steps.ShellCommand(name="make all",

command=["make", "all"],

haltOnFailure=True,

description="make all")

# step 4: make packages

makepackages = steps.ShellCommand(name="make packages",

command=["make", "packages"],

haltOnFailure=True,

description="make packages")

# step 5: upload packages to central server. This needs passwordless ssh

# from the worker to the server (set it up in advance as part of worker setup)

uploadpackages = steps.ShellCommand(name="upload packages",

description="upload packages",

command="scp packages/*.rpm packages/*.deb packages/*.tgz someuser@somehost:/repository",

haltOnFailure=True)

# create the build factory and add the steps to it

f_simplebuild = util.BuildFactory()

f_simplebuild.addStep(makeclean)

f_simplebuild.addStep(checkout)

f_simplebuild.addStep(makeall)

f_simplebuild.addStep(makepackages)

f_simplebuild.addStep(uploadpackages)

# finally, declare the list of builders. In this case, we only have one builder

c['builders'] = [

util.BuilderConfig(name="simplebuild", workernames=['worker1', 'worker2', 'worker3'], factory=f_simplebuild)

]

So our builder is called simplebuild and can run on any of worker1, worker2 and worker3. If our repository has other branches besides trunk, we could create one or more additional builders to build them; in the example, only the checkout step would be different, in that it would need to check out the specific branch. Depending on how exactly those branches have to be built, the shell commands may be recycled, or new ones would have to be created if they are different in the branch. You get the idea. The important thing is that all the builders be named differently and all be added to the c['builders'] value (as can be seen above, it is a list of BuilderConfig objects).

Of course the type and number of steps will vary depending on the goal; for example, to just check that a commit doesn’t break the build, we could include just up to the make all step. Or we could have a builder that performs a more thorough test by also doing make test or other targets. You get the idea. Note that at each step except the very first we use haltOnFailure=True because it would not make sense to execute a step if the previous one failed (ok, it wouldn’t be needed for the last step, but it’s harmless and protects us if one day we add another step after it).
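To make the effect of haltOnFailure concrete, here is a toy model in plain Python. It is not how Buildbot runs steps internally, only a sketch of the behaviour described above: steps run in order, and a failing step stops the sequence only if it was marked to halt on failure. The function run_steps and the tuple layout are hypothetical.

```python
def run_steps(steps):
    """Run (name, action, halt_on_failure) tuples in order.

    `action` is a callable returning True on success.
    Returns the names of the steps that were actually executed.
    """
    executed = []
    for name, action, halt_on_failure in steps:
        executed.append(name)
        succeeded = action()
        if not succeeded and halt_on_failure:
            break  # later steps are skipped
    return executed


build = [
    ("make clean", lambda: False, False),     # fails on a fresh worker, harmless
    ("svn update", lambda: True, True),
    ("make all", lambda: False, True),        # simulated compile failure
    ("make packages", lambda: True, True),
    ("upload packages", lambda: True, True),
]
print(run_steps(build))
```

In this run the failed make clean does not stop anything, while the failed make all prevents make packages and the upload from running, which is exactly why the first step is the only one without haltOnFailure=True.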

1.4.1.3. Schedulers

Now this is all nice and dandy, but who tells the builder (or builders) to run, and when? This is the job of the scheduler, which is a fancy name for an element that waits for some event to happen, and when it does, based on that information decides whether and when to run a builder (and which one or ones). There can be more than one scheduler. I’m being purposely vague here because the possibilities are almost endless and highly dependent on the actual setup, build purposes, source repository layout and other elements.

So a scheduler needs to be configured with two main pieces of information: on one hand, which events to react to, and on the other hand, which builder or builders to trigger when those events are detected. (It’s more complex than that, but if you understand this, you can get the rest of the details from the docs).

A simple type of scheduler may be a periodic scheduler: when a configurable amount of time has passed, run a certain builder (or builders). In our example, that’s how we would trigger a build every hour:

from buildbot.plugins import schedulers

# define the periodic scheduler
hourlyscheduler = schedulers.Periodic(
    name="hourly",
    builderNames=["simplebuild"],
    periodicBuildTimer=3600)

# define the available schedulers
c['schedulers'] = [hourlyscheduler]

That’s it. Every hour this hourly scheduler will run the simplebuild builder. If we have more than one builder that we want to run every hour, we can just add them to the builderNames list when defining the scheduler and they will all be run. Or, since multiple schedulers are allowed, other schedulers can be defined and added to c['schedulers'] in the same way.

Other types of schedulers exist; in particular, there are schedulers that can be more dynamic than the periodic one. The typical dynamic scheduler is one that learns about changes in a source repository (generally because some developer checks in some change), and triggers one or more builders in response to those changes. Let’s assume for now that the scheduler “magically” learns about changes in the repository (more about this later); here’s how we would define it:

from buildbot.plugins import schedulers, util

# define the dynamic scheduler
trunkchanged = schedulers.SingleBranchScheduler(
    name="trunkchanged",
    change_filter=util.ChangeFilter(branch=None),
    treeStableTimer=300,
    builderNames=["simplebuild"])

# define the available schedulers
c['schedulers'] = [trunkchanged]

This scheduler receives changes happening to the repository, and among all of them, pays attention to those happening in “trunk” (that’s what branch=None means). In other words, it filters the changes to react only to those it’s interested in. When such changes are detected, and the tree has been quiet for 5 minutes (300 seconds), it runs the simplebuild builder. The treeStableTimer helps in those situations where commits tend to happen in bursts, which would otherwise result in multiple build requests queuing up.
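To make the effect of treeStableTimer concrete, here is a minimal sketch in plain Python of the coalescing idea: each new change restarts the timer, and a build fires only once the tree has been quiet for the full interval. It is a model of the behavior described above, not Buildbot's implementation; the function name and the timestamps are invented for the example.

```python
def builds_after_quiet_period(change_times, tree_stable_timer):
    """Return (fire_time, number_of_changes) for each burst of changes.

    change_times: timestamps (in seconds) at which changes arrived.
    tree_stable_timer: quiet period required before a build is started.
    """
    builds = []
    pending = []
    for t in sorted(change_times):
        # If the previous burst had already been quiet long enough when this
        # change arrived, that burst produced a build of its own.
        if pending and t - pending[-1] >= tree_stable_timer:
            builds.append((pending[-1] + tree_stable_timer, len(pending)))
            pending = []
        pending.append(t)
    if pending:
        builds.append((pending[-1] + tree_stable_timer, len(pending)))
    return builds


# Three commits within two minutes, then a lone commit half an hour later.
print(builds_after_quiet_period([0, 60, 120, 1800], 300))
# [(420, 3), (2100, 1)]
```

The burst of three commits results in a single build at t=420 rather than three queued build requests.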

What if we want to act on two branches (say, trunk and 7.2)? First we create two builders, one for each branch (see the builders paragraph above), then we create two dynamic schedulers:

from buildbot.plugins import schedulers, util

# define the dynamic scheduler for trunk
trunkchanged = schedulers.SingleBranchScheduler(
    name="trunkchanged",
    change_filter=util.ChangeFilter(branch=None),
    treeStableTimer=300,
    builderNames=["simplebuild-trunk"])

# define the dynamic scheduler for the 7.2 branch
branch72changed = schedulers.SingleBranchScheduler(
    name="branch72changed",
    change_filter=util.ChangeFilter(branch='branches/7.2'),
    treeStableTimer=300,
    builderNames=["simplebuild-72"])

# define the available schedulers
c['schedulers'] = [trunkchanged, branch72changed]

The syntax of the change filter is VCS-dependent (above is for SVN), but again once the idea is clear, the documentation has all the details. Another feature of the scheduler is that it can be told which changes, within those it’s paying attention to, are important and which are not. For example, there may be a documentation directory in the branch the scheduler is watching, but changes under that directory should not trigger a build of the binary. This finer filtering is implemented by means of the fileIsImportant argument to the scheduler (full details in the docs and - alas - in the sources).
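As a rough sketch of what such a fileIsImportant callable might look like: the version below assumes the callable receives a change object exposing a files list of repository-relative paths and returns True when the change deserves a build. The docs/ prefix and the function name are made up for this example, and stand-in objects replace real changes so that it runs without Buildbot.

```python
from types import SimpleNamespace


def touches_more_than_docs(change):
    """Return True if the change modifies anything outside docs/.

    Assumes `change.files` is a list of repository-relative paths; the
    "docs/" prefix is a hypothetical layout.
    """
    return any(not name.startswith("docs/") for name in change.files)


# Stand-ins for change objects, so the sketch runs standalone.
docs_only = SimpleNamespace(files=["docs/manual.rst", "docs/index.rst"])
mixed = SimpleNamespace(files=["docs/manual.rst", "src/main.c"])

print(touches_more_than_docs(docs_only))  # False: nothing to rebuild
print(touches_more_than_docs(mixed))      # True: source code changed
```

Under those assumptions, the function would be passed as fileIsImportant=touches_more_than_docs when constructing the scheduler.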

1.4.1.4. Change sources

Earlier we said that a dynamic scheduler “magically” learns about changes; the final piece of the puzzle is the change sources, which are precisely the elements in Buildbot whose task is to detect changes in the repository and communicate them to the schedulers. Note that periodic schedulers don’t need a change source, since they only depend on elapsed time; dynamic schedulers, on the other hand, do need a change source.

A change source is generally configured with information about a source repository (which is where changes happen); a change source can watch changes at different levels in the hierarchy of the repository, so for example it is possible to watch the whole repository or a subset of it, or just a single branch. This determines the extent of the information that is passed down to the schedulers.

There are many ways a change source can learn about changes; it can periodically poll the repository for changes, or the VCS can be configured (for example through hook scripts triggered by commits) to push changes into the change source. While these two methods are probably the most common, they are not the only possibilities; it is possible for example to have a change source detect changes by parsing some email sent to a mailing list when a commit happens, and yet other methods exist. The manual again has the details.

To complete our example, here’s a change source that polls an SVN repository every 2 minutes:

from buildbot.plugins import changes, util

svnpoller = changes.SVNPoller(
    repourl="svn://myrepo/projects/coolproject",
    svnuser="foo",
    svnpasswd="bar",
    pollinterval=120,
    split_file=util.svn.split_file_branches)

c['change_source'] = svnpoller

This poller watches the whole “coolproject” section of the repository, so it will detect changes in all the branches. We could have said:

repourl = "svn://myrepo/projects/coolproject/trunk"

or:

repourl = "svn://myrepo/projects/coolproject/branches/7.2"

to watch only a specific branch.

To watch another project, you need to create another change source – and you need to filter changes by project. For instance, when you add a change source watching project ‘superproject’ to the above example, you need to change:

trunkchanged = schedulers.SingleBranchScheduler(
    name="trunkchanged",
    change_filter=util.ChangeFilter(branch=None),
    # ...
)

to e.g.:

trunkchanged = schedulers.SingleBranchScheduler(
    name="trunkchanged",
    change_filter=util.ChangeFilter(project="coolproject", branch=None),
    # ...
)

else coolproject will be built when there’s a change in superproject.

Since we’re watching more than one branch, we need a method to tell in which branch the change occurred when we detect one. This is what the split_file argument is for: it takes a callable that Buildbot will call to do the job. The split_file_branches function, which comes with Buildbot, is designed for exactly this purpose, so that’s what the example above uses.
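To give an idea of what such a callable does, here is a sketch written for this explanation (it is not copied from Buildbot). It assumes the conventional trunk/ and branches/NAME/ layout, and that the callable receives a path relative to repourl and returns a (branch, path) pair, or None for files to ignore. The function name is hypothetical.

```python
def split_trunk_and_branches(path):
    """Split a repository-relative path into (branch, path_within_branch).

    Returns None for paths outside trunk/ and branches/, meaning "ignore".
    The branch is None for trunk, matching branch=None in the change filter.
    """
    pieces = path.split("/")
    if len(pieces) > 1 and pieces[0] == "trunk":
        return (None, "/".join(pieces[1:]))
    if len(pieces) > 2 and pieces[0] == "branches":
        return ("/".join(pieces[:2]), "/".join(pieces[2:]))
    return None


print(split_trunk_and_branches("trunk/src/main.c"))         # (None, 'src/main.c')
print(split_trunk_and_branches("branches/7.2/src/main.c"))  # ('branches/7.2', 'src/main.c')
print(split_trunk_and_branches("tags/1.0/README"))          # None
```

Note how the branch values line up with the branch=None and branch='branches/7.2' filters used by the schedulers earlier.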

And of course this is all SVN-specific, but there are pollers for all the popular VCSs.

But note: if you have many projects, branches, and builders, it probably pays not to hardcode all the schedulers and builders in the configuration, but to generate them dynamically, starting from a list of all projects, branches, targets etc. and using loops to generate all possible combinations (or only the needed ones, depending on the specific setup), as explained in the documentation chapter about Customization.
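A minimal sketch of that loop-based approach follows. It builds plain dicts rather than Buildbot objects so that it runs standalone; in a real master.cfg each dict would instead become the corresponding BuilderConfig or SingleBranchScheduler. The project list, branch labels and naming scheme are assumptions made up for the example.

```python
from itertools import product

# Hypothetical inventory; replace with your own projects and branches.
projects = ["coolproject", "superproject"]
branches = {"trunk": None, "72": "branches/7.2"}  # label -> branch name

builder_specs = []
scheduler_specs = []
for project, (label, branch) in product(projects, branches.items()):
    builder_name = "%s-%s" % (project, label)
    builder_specs.append({"name": builder_name})
    scheduler_specs.append({
        "name": builder_name + "-changed",
        "project": project,   # would feed the change filter
        "branch": branch,     # would feed the change filter
        "treeStableTimer": 300,
        "builderNames": [builder_name],
    })

for spec in scheduler_specs:
    print(spec["name"], "->", spec["builderNames"])
```

Adding a project or branch then means adding one entry to a list rather than copying a whole scheduler and builder definition.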

1.4.1.5. Reporters

Now that the basics are in place, let’s go back to the builders, which is where the real work happens. Reporters are simply the means Buildbot uses to inform the world about what’s happening, that is, how builders are doing. There are many reporters: a mail notifier, an IRC notifier, and others. They are described fairly well in the manual.

One thing I’ve found useful is the ability to pass a domain name as the lookup argument to a MailNotifier, which allows you to take an unqualified username as it appears in the SVN change and create a valid email address by appending the given domain name to it:

from buildbot.plugins import reporters

# if jsmith commits a change, mail for the build is sent to jsmith@example.org
notifier = reporters.MailNotifier(
    fromaddr="buildbot@example.org",
    sendToInterestedUsers=True,
    lookup="example.org")

c['reporters'].append(notifier)
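The address construction itself is simple enough to sketch in a few lines of plain Python. This only illustrates the idea of appending a domain to an unqualified username; the function name is made up, and passing through names that already contain an "@" is an assumption of this sketch.

```python
def address_for(username, domain):
    """Map a VCS username to an email address by appending a domain.

    Names that already look like addresses are returned unchanged
    (an assumption of this sketch).
    """
    if "@" in username:
        return username
    return "%s@%s" % (username, domain)


print(address_for("jsmith", "example.org"))                  # jsmith@example.org
print(address_for("jdoe@elsewhere.example", "example.org"))  # jdoe@elsewhere.example
```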

The mail notifier can be customized at will by means of the messageFormatter argument, which is a class that Buildbot calls to format the body of the email, and to which it makes available lots of information about the build. For more details, look into the Reporters section of the Buildbot manual.

1.4.1.6. Conclusion

Please note that this article has just scratched the surface; given the complexity of the task of build automation, the possibilities are almost endless. So there’s much, much more to say about Buildbot. However, hopefully this is a preparation step before reading the official manual. Had I found an explanation like the one above when I was approaching Buildbot, I’d have had to read the manual just once, rather than multiple times. Hope this can help someone else.

(Thanks to Davide Brini for permission to include this tutorial, derived from one he originally posted at http://backreference.org .)

This is the Buildbot manual for Buildbot version 2.7.0.

2. Buildbot Manual

2.1. Introduction

Buildbot is a system to automate the compile/test cycle required by most software projects to validate code changes. By automatically rebuilding and testing the project each time something has changed, build problems are pinpointed quickly, before other developers are inconvenienced by the failure. The guilty developer can be identified and harassed without human intervention. By running the builds on a variety of platforms, developers who do not have the facilities to test their changes everywhere before checkin will at least know shortly afterwards whether they have broken the build or not. Warning counts, lint checks, image size, compile time, and other build parameters can be tracked over time, are more visible, and are therefore easier to improve.

The overall goal is to reduce project breakage and provide a platform to run tests or code-quality checks that are too annoying or pedantic for any human to waste their time with. Developers get immediate (and potentially public) feedback about their changes, encouraging them to be more careful about testing before checkin.

Features:

- run builds on a variety of worker platforms

- arbitrary build process: handles projects using C, Python, whatever

- minimal host requirements: Python and Twisted

- workers can be behind a firewall if they can still do checkout

- status delivery through web page, email, IRC, other protocols

- track builds in progress, provide estimated completion time

- flexible configuration by subclassing generic build process classes

- debug tools to force a new build, submit fake Changes, query worker status

- released under the GPL

2.1.1. History and Philosophy

The Buildbot was inspired by a similar project built for a development team writing a cross-platform embedded system. The various components of the project were supposed to compile and run on several flavors of unix (linux, solaris, BSD), but individual developers had their own preferences and tended to stick to a single platform. From time to time, incompatibilities would sneak in (some unix platforms want to use string.h, some prefer strings.h), and then the project would compile for some developers but not others. The Buildbot was written to automate the human process of walking into the office, updating a project, compiling (and discovering the breakage), finding the developer at fault, and complaining to them about the problem they had introduced. With multiple platforms it was difficult for developers to do the right thing (compile their potential change on all platforms); the Buildbot offered a way to help.

Another problem was when programmers would change the behavior of a library without warning its users, or change internal aspects that other code was (unfortunately) depending upon. Adding unit tests to the codebase helps here: if an application’s unit tests pass despite changes in the libraries it uses, you can have more confidence that the library changes haven’t broken anything. Many developers complained that the unit tests were inconvenient or took too long to run: having the Buildbot run them reduces the developer’s workload to a minimum.

In general, having more visibility into the project is always good, and automation makes it easier for developers to do the right thing. When everyone can see the status of the project, developers are encouraged to keep the project in good working order. Unit tests that aren’t run on a regular basis tend to suffer from bitrot just like code does: exercising them on a regular basis helps to keep them functioning and useful.

The current version of the Buildbot is additionally targeted at distributed free-software projects, where resources and platforms are only available when provided by interested volunteers. The workers are designed to require an absolute minimum of configuration, reducing the effort a potential volunteer needs to expend to be able to contribute a new test environment to the project. The goal is that anyone who wishes that a given project would run on their favorite platform should be able to offer that project a worker, running on that platform, where they can verify that their portability code works, and keeps working.

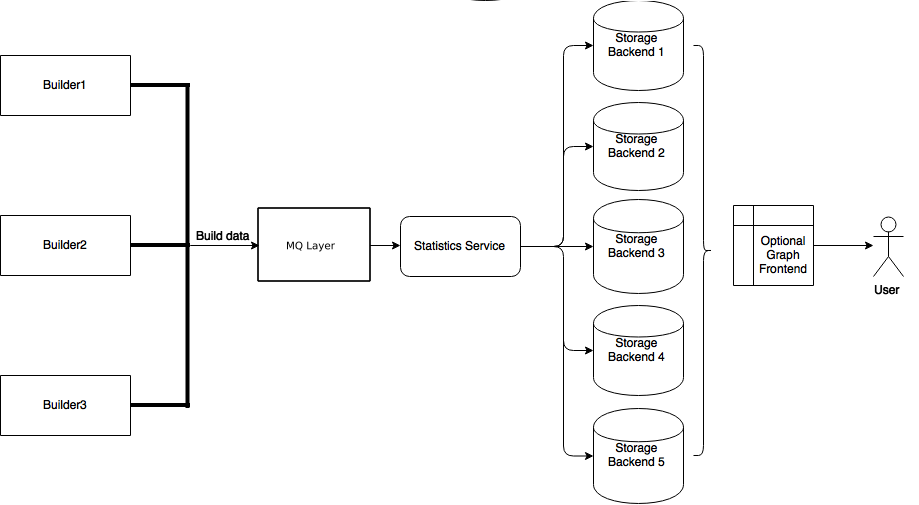

2.1.2. System Architecture

The Buildbot consists of a single buildmaster and one or more workers, connected in a star topology. The buildmaster makes all decisions about what, when, and how to build. It sends commands to be run on the workers, which simply execute the commands and return the results. (Certain steps involve more local decision making, where the overhead of sending a lot of commands back and forth would be inappropriate, but in general the buildmaster is responsible for everything.)

The buildmaster is usually fed Changes by some sort of version control system (Change Sources and Changes), which may cause builds to be run. As the builds are performed, various status messages are produced, which are then sent to any registered Reporters.

The buildmaster is configured and maintained by the buildmaster admin, who is generally the project team member responsible for build process issues. Each worker is maintained by a worker admin, who does not need to be quite as involved. Generally workers are run by anyone who has an interest in seeing the project work well on their favorite platform.

2.1.2.1. Worker Connections

The workers are typically run on a variety of separate machines, at least one per platform of interest. These machines connect to the buildmaster over a TCP connection to a publicly-visible port. As a result, the workers can live behind a NAT box or similar firewalls, as long as they can reach the buildmaster. The TCP connections are initiated by the worker and accepted by the buildmaster, but data travels both ways within this connection: the buildmaster is always in charge, so commands travel exclusively from the buildmaster to the worker, and results travel back.

To perform builds, the workers must typically obtain source code from a CVS/SVN/etc repository. Therefore they must also be able to reach the repository. The buildmaster provides instructions for performing builds, but does not provide the source code itself.

2.1.2.2. Buildmaster Architecture

The buildmaster consists of several pieces:

- Change Sources, which create a Change object each time something is modified in the VC repository. Most ChangeSources listen for messages from a hook script of some sort. Some sources actively poll the repository on a regular basis. All Changes are fed to the schedulers.

- Schedulers, which decide when builds should be performed. They collect Changes into BuildRequests, which are then queued for delivery to Builders until a worker is available.

- Builders, which control exactly how each build is performed (with a series of BuildSteps, configured in a BuildFactory). Each Build is run on a single worker.

- Status plugins, which deliver information about the build results through protocols like HTTP, mail, and IRC.

Each Builder is configured with a list of Workers that it will use for its builds. These workers are expected to behave identically: the only reason to use multiple Workers for a single Builder is to provide a measure of load-balancing.

Within a single Worker, each Builder creates its own WorkerForBuilder instance. These WorkerForBuilders operate independently from each other. Each gets its own base directory to work in. It is quite common to have many Builders sharing the same worker. For example, there might be two workers: one for i386, and a second for PowerPC. There may then be a pair of Builders that do a full compile/test run, one for each architecture, and a lone Builder that creates snapshot source tarballs if the full builders complete successfully. The full builders would each run on a single worker, whereas the tarball creation step might run on either worker (since the platform doesn’t matter when creating source tarballs). In this case, the mapping would look like:

Builder(full-i386) -> Workers(worker-i386)

Builder(full-ppc) -> Workers(worker-ppc)

Builder(source-tarball) -> Workers(worker-i386, worker-ppc)

and each Worker would have two WorkerForBuilders inside it, one for a full builder, and a second for the source-tarball builder.
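The mapping can be checked with a few lines of plain Python. This sketch simply inverts the Builder-to-Workers table above to list which builders each worker hosts; it models the bookkeeping only, and the dictionary names are invented for the example.

```python
from collections import defaultdict

# Builder -> workers mapping from the example above.
builder_workers = {
    "full-i386": ["worker-i386"],
    "full-ppc": ["worker-ppc"],
    "source-tarball": ["worker-i386", "worker-ppc"],
}

# Invert it: one entry per builder/worker pair, standing in for a
# WorkerForBuilder instance.
worker_for_builders = defaultdict(list)
for builder, workers in builder_workers.items():
    for worker in workers:
        worker_for_builders[worker].append(builder)

for worker, builders in sorted(worker_for_builders.items()):
    print(worker, "->", builders)
# worker-i386 -> ['full-i386', 'source-tarball']
# worker-ppc -> ['full-ppc', 'source-tarball']
```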

Once a WorkerForBuilder is available, the Builder pulls one or more BuildRequests off its incoming queue. (It may pull more than one if it determines that it can merge the requests together; for example, there may be multiple requests to build the current HEAD revision). These requests are merged into a single Build instance, which includes the SourceStamp that describes what exact version of the source code should be used for the build. The Build is then randomly assigned to a free WorkerForBuilder and the build begins.

The behaviour when BuildRequests are merged can be customized; see Collapsing Build Requests.
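As an illustration of the merging idea only, the sketch below groups queued requests that ask for the same revision, so that each group would become a single build. The real criteria Buildbot applies are richer than a revision comparison; the function name and the request tuples are hypothetical.

```python
def collapse_requests(requests):
    """Group queued requests by the revision they ask for.

    requests: list of (request_id, revision) tuples in queue order.
    Returns a list of (revision, [request_ids]), one entry per build.
    """
    merged = {}
    for request_id, revision in requests:
        merged.setdefault(revision, []).append(request_id)
    return list(merged.items())


queue = [(1, "HEAD"), (2, "r1500"), (3, "HEAD")]
print(collapse_requests(queue))  # [('HEAD', [1, 3]), ('r1500', [2])]
```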

2.1.2.3. Status Delivery Architecture

The buildmaster maintains a central Status object, to which various status plugins are connected. Through this Status object, a full hierarchy of build status objects can be obtained.

The configuration file controls which status plugins are active. Each status plugin gets a reference to the top-level Status object. From there they can request information on each Builder, Build, Step, and LogFile. This query-on-demand interface is used by the html.Waterfall plugin to create the main status page each time a web browser hits the main URL.

The status plugins can also subscribe to hear about new Builds as they occur: this is used by the MailNotifier to create new email messages for each recently-completed Build.

The Status object records the status of old builds on disk in the buildmaster’s base directory. This allows it to return information about historical builds.

There are also status objects that correspond to Schedulers and Workers. These allow status plugins to report information about upcoming builds, and the online/offline status of each worker.

2.1.3. Control Flow

A day in the life of the Buildbot:

- A developer commits some source code changes to the repository. A hook script or commit trigger of some sort sends information about this change to the buildmaster through one of its configured Change Sources. This notification might arrive via email, or over a network connection (either initiated by the buildmaster as it subscribes to changes, or by the commit trigger as it pushes Changes towards the buildmaster). The Change contains information about who made the change, what files were modified, which revision contains the change, and any checkin comments.

- The buildmaster distributes this change to all of its configured schedulers. Any important changes cause the tree-stable-timer to be started, and the Change is added to a list of those that will go into a new Build. When the timer expires, a Build is started on each of a set of configured Builders, all compiling/testing the same source code. Unless configured otherwise, all Builds run in parallel on the various workers.

- The Build consists of a series of Steps. Each Step causes some number of commands to be invoked on the remote worker associated with that Builder. The first step is almost always to perform a checkout of the appropriate revision from the same VC system that produced the Change. The rest generally perform a compile and run unit tests. As each Step runs, the worker reports back command output and return status to the buildmaster.

- As the Build runs, status messages like “Build Started”, “Step Started”, “Build Finished”, etc, are published to a collection of Status Targets. One of these targets is usually the HTML Waterfall display, which shows a chronological list of events, and summarizes the results of the most recent build at the top of each column. Developers can periodically check this page to see how their changes have fared. If they see red, they know that they’ve made a mistake and need to fix it. If they see green, they know that they’ve done their duty and don’t need to worry about their change breaking anything.

- If a MailNotifier status target is active, the completion of a build will cause email to be sent to any developers whose Changes were incorporated into this Build. The MailNotifier can be configured to only send mail upon failing builds, or for builds which have just transitioned from passing to failing. Other status targets can provide similar real-time notification via different communication channels, like IRC.

2.2. Installation

2.2.1. Buildbot Components

Buildbot is shipped in two components: the buildmaster (called buildbot for legacy reasons) and the worker. The worker component has far fewer requirements, and is more broadly compatible than the buildmaster. You will need to carefully pick the environment in which to run your buildmaster, but the worker should be able to run just about anywhere.

It is possible to install the buildmaster and worker on the same system, although for anything but the smallest installation this arrangement will not be very efficient.

2.2.2. Requirements

2.2.2.1. Common Requirements

At a bare minimum, you’ll need the following for both the buildmaster and a worker:

Python: https://www.python.org

Buildbot master works with Python-3.5+. Buildbot worker works with Python 2.7, or Python 3.5+.

Note

This should be a “normal” build of Python. Builds of Python with debugging enabled or other unusual build parameters are likely to cause incorrect behavior.

Twisted: http://twistedmatrix.com

Buildbot requires Twisted-17.9.0 or later on the master and the worker. In upcoming versions of Buildbot, a newer Twisted will also be required on the worker. As always, the most recent version is recommended.

Of course, your project’s build process will impose additional requirements on the workers. These hosts must have all the tools necessary to compile and test your project’s source code.

Windows Support

Buildbot - both master and worker - runs well natively on Windows. The worker runs well on Cygwin, but because of problems with SQLite on Cygwin, the master does not.

Buildbot’s windows testing is limited to the most recent Twisted and Python versions. For best results, use the most recent available versions of these libraries on Windows.

Pywin32: http://sourceforge.net/projects/pywin32/

Twisted requires PyWin32 in order to spawn processes on Windows.

2.2.2.2. Buildmaster Requirements

Note that all of these requirements aside from SQLite can easily be installed from the Python package repository, PyPI.

sqlite3: http://www.sqlite.org

Buildbot requires a database to store its state, and by default uses SQLite. Version 3.7.0 or higher is recommended, although Buildbot will run down to 3.6.16 – at the risk of “Database is locked” errors. The minimum version is 3.4.0, below which parallel database queries and schema introspection fail.

Please note that Python ships with sqlite3 by default since Python 2.6.

If you configure a different database engine, then SQLite is not required. However, note that Buildbot’s own unit tests require SQLite.
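To find out which SQLite library your Python is linked against, the standard sqlite3 module exposes the version. The sketch below compares it with the thresholds quoted above; the classification labels and the function name are made up for illustration.

```python
import sqlite3

RECOMMENDED = (3, 7, 0)  # recommended version, from the text above
MINIMUM = (3, 4, 0)      # minimum version, from the text above


def classify_sqlite(version_info):
    """Classify an SQLite version tuple against the thresholds above."""
    if version_info >= RECOMMENDED:
        return "recommended"
    if version_info >= MINIMUM:
        return "below recommended"
    return "unsupported"


# sqlite_version_info describes the SQLite library, not the Python module.
print(sqlite3.sqlite_version, classify_sqlite(sqlite3.sqlite_version_info))
```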

Jinja2: http://jinja.pocoo.org/

Buildbot requires Jinja version 2.1 or higher.

Jinja2 is a general purpose templating language and is used by Buildbot to generate the HTML output.

SQLAlchemy: http://www.sqlalchemy.org/

Buildbot requires SQLAlchemy version 1.1.0 or higher. SQLAlchemy allows Buildbot to build database schemas and queries for a wide variety of database systems.

SQLAlchemy-Migrate: https://sqlalchemy-migrate.readthedocs.io/en/latest/

Buildbot requires SQLAlchemy-Migrate version 0.9.0 or higher. Buildbot uses SQLAlchemy-Migrate to manage schema upgrades from version to version.

Python-Dateutil: http://labix.org/python-dateutil

Buildbot requires Python-Dateutil in version 1.5 or higher (the last version to support Python-2.x). This is a small, pure-Python library.

Autobahn:

The master requires Autobahn version 0.16.0 or higher with Python 2.7.

2.2.3. Installing the code

2.2.3.1. The Buildbot Packages

Buildbot comes in several parts: buildbot (the buildmaster), buildbot-worker (the worker), buildbot-www, and several web plugins such as buildbot-waterfall-view.

The worker and buildmaster can be installed individually or together. The base web (buildbot.www) and web plugins are required to run a master with a web interface (the common configuration).

2.2.3.2. Installation From PyPI

The preferred way to install Buildbot is using pip. For the master:

pip install buildbot

and for the worker:

pip install buildbot-worker

When using pip to install instead of distribution specific package managers, e.g. via apt-get or ports, it is simpler to choose exactly which version one wants to use. It may however be easier to install via distribution specific package managers, but note that they may provide an earlier version than what is available via pip.

If you plan to use TLS or SSL in master configuration (e.g. to fetch resources over HTTPS using twisted.web.client), you need to install Buildbot with tls extras:

pip install buildbot[tls]

2.2.3.3. Installation From Tarballs

Buildbot master and buildbot-worker are installed using the standard Python distutils process. For either component, after unpacking the tarball, the process is:

python setup.py build

python setup.py install

where the install step may need to be done as root. This will put the bulk of the code in somewhere like /usr/lib/pythonx.y/site-packages/buildbot. It will also install the buildbot command-line tool in /usr/bin/buildbot.

If the environment variable $NO_INSTALL_REQS is set to 1, then setup.py will not try to install Buildbot’s requirements. This is usually only useful when building a Buildbot package.

To test this, shift to a different directory (like /tmp), and run:

buildbot --version

# or

buildbot-worker --version

If it shows you the versions of Buildbot and Twisted, the install went ok. If it says “no such command” or it gets an ImportError when it tries to load the libraries, then something went wrong. pydoc buildbot is another useful diagnostic tool.
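If the command-line check fails, it can help to ask Python directly whether it can locate the packages. The following sketch uses only the standard library; the helper name is made up, and the module names checked are the ones used elsewhere in this manual.

```python
import importlib.util


def is_importable(module_name):
    """Return True if Python can locate the named top-level module."""
    return importlib.util.find_spec(module_name) is not None


for name in ("buildbot", "buildbot_worker", "twisted"):
    print(name, "found" if is_importable(name) else "NOT found")
```

A "NOT found" line for a package you just installed usually points at a different Python interpreter or a misconfigured PYTHONPATH.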

Windows users will find these files in other places. You will need to make sure that Python can find the libraries, and will probably find it convenient to have buildbot on your PATH.

2.2.3.4. Installation in a Virtualenv

If you cannot or do not wish to install the buildbot into a site-wide location like /usr or /usr/local, you can also install it into the account’s home directory or any other location using a tool like virtualenv.

2.2.3.5. Running Buildbot’s Tests (optional)

If you wish, you can run the buildbot unit test suite. First, make sure you are not using a Python wheels packaged version of Buildbot and did not specify the bdist_wheel command when building; the test suite is not included with the PyPI packaged version. Then ensure you have the mock Python module installed from PyPI. This module is not required for ordinary Buildbot operation - only to run the tests. Note that this is not the same as the Fedora mock package!

You can check with

python -mmock

Then, run the tests:

PYTHONPATH=. trial buildbot.test

# or

PYTHONPATH=. trial buildbot_worker.test

Nothing should fail, although a few might be skipped.

If any of the tests fail for reasons other than a missing mock, you should stop and investigate the cause before continuing the installation process, as it will probably be easier to track down the bug early. In most cases, the problem is incorrectly installed Python modules or a badly configured PYTHONPATH. This may be a good time to contact the Buildbot developers for help.

2.2.4. Buildmaster Setup

2.2.4.1. Creating a buildmaster

As you learned earlier (System Architecture), the buildmaster runs on a central host (usually one that is publicly visible, so everybody can check on the status of the project), and controls all aspects of the buildbot system.

You will probably wish to create a separate user account for the buildmaster, perhaps named buildmaster. Do not run the buildmaster as root!

You need to choose a directory for the buildmaster, called the basedir. This directory will be owned by the buildmaster. It will contain configuration, the database, and status information - including logfiles. On a large buildmaster this directory will see a lot of activity, so it should be on a disk with adequate space and speed.

Once you’ve picked a directory, use the buildbot create-master command to create the directory and populate it with startup files:

buildbot create-master -r basedir

You will need to create a configuration file before starting the buildmaster. Most of the rest of this manual is dedicated to explaining how to do this. A sample configuration file is placed in the working directory, named master.cfg.sample, which can be copied to master.cfg and edited to suit your purposes.

(Internal details: This command creates a file named buildbot.tac that contains all the state necessary to create the buildmaster. Twisted has a tool called twistd which can use this .tac file to create and launch a buildmaster instance. Twistd takes care of logging and daemonization (running the program in the background). /usr/bin/buildbot is a front end which runs twistd for you.)

Your master will need a database to store the various information about your builds, and its configuration. By default, the sqlite3 backend will be used. This needs neither configuration nor extra software. All information will be stored in the file state.sqlite. Buildbot however supports multiple backends. See Using A Database Server for more options.

Buildmaster Options

This section lists options to the create-master command. You can also type buildbot create-master --help for an up-to-the-moment summary.

--force-

This option will allow to re-use an existing directory.

--no-logrotate-

This disables internal worker log management mechanism. With this option worker does not override the default logfile name and its behaviour giving a possibility to control those with command-line options of twistd daemon.

--relocatable-

This creates a “relocatable”

buildbot.tac, which uses relative paths instead of absolute paths, so that the buildmaster directory can be moved about.

--config-

The name of the configuration file to use. This configuration file need not reside in the buildmaster directory.

--log-size-

This is the size in bytes when to rotate the Twisted log files. The default is 10MiB.

--log-count-

This is the number of log rotations to keep around. You can either specify a number or

Noneto keep alltwistd.logfiles around. The default is 10.

--db

The database that the Buildmaster should use. Note that the same value must be added to the configuration file.

2.2.4.2. Upgrading an Existing Buildmaster

If you have just installed a new version of the Buildbot code, and you have buildmasters that were created using an older version, you’ll need to upgrade these buildmasters before you can use them. The upgrade process adds and modifies files in the buildmaster’s base directory to make it compatible with the new code.

buildbot upgrade-master basedir

This command will also scan your master.cfg file for incompatibilities (by loading it and printing any errors or deprecation warnings that occur). Each buildbot release tries to be compatible with configurations that worked cleanly (i.e. without deprecation warnings) on the previous release: any functions or classes that are to be removed will first be deprecated in a release, to give you a chance to start using the replacement.

The upgrade-master command is idempotent. It is safe to run it multiple times. After each upgrade of the Buildbot code, you should use upgrade-master on all your buildmasters.
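Because the command is idempotent, it lends itself to scripting across several buildmasters. The following is a minimal sketch, not part of Buildbot itself: it assumes the buildbot executable is on the PATH, and the helper names upgrade_commands and upgrade_all are hypothetical.

```python
import subprocess


def upgrade_commands(basedirs):
    """Return one `buildbot upgrade-master` argument list per base directory."""
    return [["buildbot", "upgrade-master", str(basedir)] for basedir in basedirs]


def upgrade_all(basedirs):
    """Run the upgrades one at a time, stopping at the first failure."""
    for command in upgrade_commands(basedirs):
        # check=True raises CalledProcessError if the command exits non-zero.
        subprocess.run(command, check=True)


# Hypothetical base directories; only the command lines are printed here.
for command in upgrade_commands(["/home/buildbot/master-a", "/home/buildbot/master-b"]):
    print(" ".join(command))
```

Calling upgrade_all with the same list would actually run the upgrades. Running them sequentially keeps each upgrade uninterrupted, in line with the warning below.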

Warning

The upgrade-master command may perform database schema modifications. To avoid any data loss or corruption, it should not be interrupted. As a safeguard, it ignores all signals except SIGKILL.

In general, Buildbot workers and masters can be upgraded independently, although some new features will not be available, depending on the master and worker versions.

Beyond this general information, read all of the sections below that apply to versions through which you are upgrading.

Version-specific Notes

See Upgrading to Buildbot 0.9.0 for a guide to upgrading from 0.8.x to 0.9.x

The 0.7.6 release introduced the public_html/ directory, which contains index.html and other files served by the WebStatus and Waterfall status displays. The upgrade-master command will create these files if they do not already exist. It will not modify existing copies, but it will write a new copy in e.g. index.html.new if the new version differs from the version that already exists.

Buildbot-0.8.0 introduces a database backend, which is SQLite by default. The upgrade-master command will automatically create and populate this database with the changes the buildmaster has seen. Note that, as of this release, build history is not contained in the database, and is thus not migrated.

If you are not using sqlite, you will need to add an entry to your master.cfg to reflect the database you are using. The upgrade process does not edit your master.cfg for you, so add something like:

# for using mysql:

c['db_url'] = 'mysql://bbuser:<password>@localhost/buildbot'

Once the parameter has been added, invoke upgrade-master. This will extract the DB url from your configuration file.

buildbot upgrade-master

See Database Specification for more options to specify a database.
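If the database password contains characters such as @ or /, it generally has to be percent-encoded before it is placed in the URL. A minimal sketch, assuming a hypothetical helper named mysql_db_url and a made-up password; only the URL shape comes from the example above:

```python
from urllib.parse import quote


def mysql_db_url(user, password, host, database):
    """Build a MySQL URL in the form shown above, percent-encoding the password."""
    # safe="" makes quote() encode "/" as well, which would otherwise be left alone.
    return "mysql://{}:{}@{}/{}".format(user, quote(password, safe=""), host, database)


# In master.cfg the result would be assigned to c['db_url'].
print(mysql_db_url("bbuser", "p@ss/word", "localhost", "buildbot"))
```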

2.2.5. Worker Setup

2.2.5.1. Creating a worker

Typically, you will be adding a worker to an existing buildmaster, to provide additional architecture coverage. The Buildbot administrator will give you several pieces of information necessary to connect to the buildmaster. You should also be somewhat familiar with the project being tested, so you can troubleshoot build problems locally.

The Buildbot exists to make sure that the project’s stated “how to build it” process actually works. To this end, the worker should run in an environment just like that of your regular developers. Typically the project build process is documented somewhere (README, INSTALL, etc.), in a document that should mention all library dependencies and contain a basic set of build instructions. This document will be useful as you configure the host and account in which the worker runs.

Here’s a good checklist for setting up a worker:

- Set up the account

It is recommended (although not mandatory) to set up a separate user account for the worker. This account is frequently named buildbot or worker. This serves to isolate your personal working environment from that of the worker, and helps to minimize the security threat posed by letting possibly-unknown contributors run arbitrary code on your system. The account should have a minimum of fancy init scripts.

- Install the Buildbot code

Follow the instructions given earlier (Installing the code). If you use a separate worker account, and you didn’t install the Buildbot code to a shared location, then you will need to install it with --home=~ for each account that needs it.

- Set up the host

Make sure the host can actually reach the buildmaster. Usually the buildmaster is running a status webserver on the same machine, so simply point your web browser at it and see if you can get there. Install whatever additional packages or libraries the project’s INSTALL document advises. (Or not: if your worker is supposed to make sure that building without optional libraries still works, then don’t install those libraries.)

Again, these libraries don’t necessarily have to be installed to a site-wide shared location, but they must be available to your build process. Accomplishing this is usually very specific to the build process, so installing them to /usr or /usr/local is usually the best approach.

- Test the build process

Follow the instructions in the INSTALL document, in the worker’s account. Perform a full CVS (or whatever) checkout, configure, make, run tests, etc. Confirm that the build works without manual fussing. If it doesn’t work when you do it by hand, it will be unlikely to work when the Buildbot attempts to do it in an automated fashion.

- Choose a base directory

This should be somewhere in the worker’s account, typically named after the project which is being tested. The worker will not touch any file outside of this directory. Something like ~/Buildbot or ~/Workers/fooproject is appropriate.

- Get the buildmaster host/port, botname, and password

When the Buildbot admin configures the buildmaster to accept and use your worker, they will provide you with the following pieces of information:

- your worker’s name

- the password assigned to your worker

- the hostname and port number of the buildmaster, e.g. http://buildbot.example.org:8007

- Create the worker

Now run the buildbot-worker command as follows:

buildbot-worker create-worker BASEDIR MASTERHOST:PORT WORKERNAME PASSWORD

This will create the base directory and a collection of files inside, including the buildbot.tac file that contains all the information you passed to the buildbot-worker command.

- Fill in the hostinfo files

When it first connects, the worker will send a few files up to the buildmaster which describe the host that it is running on. These files are presented on the web status display so that developers have more information to reproduce any test failures that are witnessed by the Buildbot. There are sample files in the info subdirectory of the Buildbot’s base directory. You should edit these to correctly describe you and your host.

BASEDIR/info/admin should contain your name and email address. This is the worker admin address, and will be visible from the build status page (so you may wish to munge it a bit if address-harvesting spambots are a concern).

BASEDIR/info/host should be filled with a brief description of the host: OS, version, memory size, CPU speed, versions of relevant libraries installed, and finally the version of the Buildbot code which is running the worker.

The optional BASEDIR/info/access_uri can specify a URI which will connect a user to the machine. Many systems accept ssh://hostname URIs for this purpose.

If you run many workers, you may want to create a single ~worker/info file and share it among all the workers with symlinks.
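The hostinfo files are plain text, so they can be filled in by hand or by a small script. A minimal sketch, assuming a hypothetical helper named write_hostinfo; the file names admin, host and access_uri come from the description above, and the values you pass in are your own:

```python
from pathlib import Path


def write_hostinfo(basedir, admin, host_description, access_uri=None):
    """Write the info files described above under BASEDIR/info."""
    info = Path(basedir) / "info"
    info.mkdir(parents=True, exist_ok=True)
    (info / "admin").write_text(admin + "\n")
    (info / "host").write_text(host_description + "\n")
    if access_uri is not None:
        # access_uri is optional, so it is only written when supplied.
        (info / "access_uri").write_text(access_uri + "\n")
    return info
```

For example, write_hostinfo("/home/worker/fooproject", "Jane Admin <jane at example dot org>", "Debian 10, 8 GiB RAM") would overwrite the sample admin and host files in that (hypothetical) base directory.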

Worker Options

There are a handful of options you might want to use when creating the worker with the buildbot-worker create-worker <options> DIR <params> command. You can type buildbot-worker create-worker --help for a summary. To use these, just include them on the buildbot-worker create-worker command line, like this:

buildbot-worker create-worker --umask=0o22 ~/worker buildmaster.example.org:42012 {myworkername} {mypasswd}

--no-logrotate

This disables the worker’s internal log management mechanism. With this option, the worker does not override the default logfile name and its behaviour, which makes it possible to control them with the command-line options of the twistd daemon.

--umask

This is a string (generally an octal representation of an integer) which will cause the worker process’ umask value to be set shortly after initialization. The twistd daemonization utility forces the umask to 077 at startup (which means that all files created by the worker or its child processes will be unreadable by any user other than the worker account). If you want build products to be readable by other accounts, you can add --umask=0o22 to tell the worker to fix the umask after twistd clobbers it. If you want build products to be writable by other accounts too, use --umask=0o000, but this is likely to be a security problem.
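To see what these umask values mean in practice, recall that a umask clears permission bits from the mode a program requests when it creates a file. A minimal sketch of that arithmetic, assuming a file requested with mode 0o666 (read and write for everyone), which is a common default; effective_mode is a hypothetical helper name:

```python
def effective_mode(requested_mode, umask):
    """Return the permission bits left after the umask clears its bits."""
    return requested_mode & ~umask


# 0o077 is what twistd forces; 0o022 and 0o000 are the values discussed above.
for umask in (0o077, 0o022, 0o000):
    print(oct(umask), "->", oct(effective_mode(0o666, umask)))
```

With 0o077 the file ends up as 0o600 (owner only), with 0o022 as 0o644 (readable by others), and with 0o000 as 0o666 (writable by others).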

--keepalive

This is a number that indicates how frequently keepalive messages should be sent from the worker to the buildmaster, expressed in seconds. The default (600) causes a message to be sent to the buildmaster at least once every 10 minutes. To set this to a lower value, use e.g. --keepalive=120.

If the worker is behind a NAT box or stateful firewall, these messages may help to keep the connection alive: some NAT boxes tend to forget about a connection if it has not been used in a while. When this happens, the buildmaster will think that the worker has disappeared, and builds will time out. Meanwhile the worker will not realize that anything is wrong.

--maxdelay

This is a number that indicates the maximum amount of time the worker will wait between connection attempts, expressed in seconds. The default (300) causes the worker to wait at most 5 minutes before trying to connect to the buildmaster again.

--maxretries

This is a number that indicates the maximum number of times the worker will attempt to connect. After that many attempts, the worker process will stop. This option is useful for Latent Workers to avoid consuming resources in case of misconfiguration or master failure.

For VM based latent workers, the user is responsible for halting the system when the Buildbot worker has exited. This feature is heavily OS dependent, and cannot be managed by the Buildbot worker. For example with systemd, one can add ExecStopPost=shutdown now to the Buildbot worker service unit configuration.

--log-size

This is the size in bytes at which the Twisted log files are rotated.

--log-count

This is the number of log rotations to keep around. You can either specify a number or None to keep all twistd.log files around. The default is 10.

--allow-shutdown

Can also be passed directly to the Worker constructor in buildbot.tac. If set, it allows the worker to initiate a graceful shutdown, meaning that it will ask the master to shut down the worker when the current build, if any, is complete.

Setting allow_shutdown to file will cause the worker to watch shutdown.stamp in basedir for updates to its mtime. When the mtime changes, the worker will request a graceful shutdown from the master. The file does not need to exist prior to starting the worker.

Setting allow_shutdown to signal will set up a SIGHUP handler to start a graceful shutdown. When the signal is received, the worker will request a graceful shutdown from the master.

The default value is None, in which case this feature will be disabled.

Both master and worker must be at least version 0.8.3 for this feature to work.
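With allow_shutdown set to file, anything that updates the mtime of shutdown.stamp triggers the request. A minimal sketch of doing so from a script, assuming a hypothetical helper named request_graceful_shutdown and a worker base directory of your own:

```python
from pathlib import Path


def request_graceful_shutdown(basedir):
    """Create shutdown.stamp in basedir, or bump its mtime if it already exists."""
    stamp = Path(basedir) / "shutdown.stamp"
    # touch() creates the file when missing and otherwise sets mtime to now.
    stamp.touch()
    return stamp
```

Calling request_graceful_shutdown("/home/worker/fooproject") has the same effect as running touch on the stamp file by hand. The worker still finishes its current build before the master shuts it down.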

--use-tls

Can also be passed directly to the Worker constructor in buildbot.tac. If set, the generated connection string starts with tls instead of with tcp, allowing an encrypted connection to the buildmaster. Make sure the worker trusts the buildmaster’s certificate. If you have a non-authoritative certificate (CA is self-signed), see connection_string below.

--delete-leftover-dirs

Can also be passed directly to the Worker constructor in buildbot.tac. If set, unexpected directories in the worker base directory will be removed. Otherwise, a warning will be displayed in twistd.log so that you can manually remove them.
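When workers are provisioned by a script, the options above can be assembled into a create-worker command line programmatically. A minimal sketch, assuming a hypothetical helper named create_worker_command; it only builds the argument list and uses just the option names documented in this section:

```python
import os


def create_worker_command(basedir, master, name, password, **options):
    """Build the argument list for `buildbot-worker create-worker`."""
    command = ["buildbot-worker", "create-worker"]
    for key, value in options.items():
        flag = "--" + key.replace("_", "-")
        if value is True:
            command.append(flag)  # switch without a value, e.g. --use-tls
        else:
            command.append("{}={}".format(flag, value))
    # Expand "~" here because no shell will do it for an argument list.
    command += [os.path.expanduser(basedir), master, name, password]
    return command


print(create_worker_command(
    "~/worker", "buildmaster.example.org:42012", "myworkername", "mypasswd",
    umask="0o22", keepalive=120, use_tls=True,
))
```

The resulting list can be handed to subprocess.run. Note that the password appears in the process arguments, just as it does when the command is typed by hand.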

Other Worker Configuration

unicode_encoding

This represents the encoding that Buildbot should use when converting unicode commandline arguments into byte strings in order to pass to the operating system when spawning new processes.

The default value is what Python’s